What’s in a name? Ask an Epsom Yeaton.

Norm Yeaton dropped a stack of papers three inches thick, attached by a clip, onto his living room table. The thud said a lot, that an arduous research project by Yeaton – in an attempt to understand the sheer volume of people in Epsom whose last name...

‘We’re just kids’: As lawmakers debate transgender athlete ban, some youth fear a future on the sidelines

Maëlle Jacques has read the articles written about her. “Winner of NH Girls High Jump is Biological Male.” “Transgender girl blasted after dominating New Hampshire girls high jump.” “U.S. ‘Full of Failing Gutless Mothers and Fathers’: High School Boy...

Most Read

Mother of two convicted of negligent homicide in fatal Loudon crash released on parole

Students’ first glimpse of new Allenstown school draws awe

Pay-by-bag works for most communities, but not Hopkinton

‘Bridging the gap’: Phenix Hall pitch to soften downtown height rules moves forward

Regal Theater in Concord is closing Thursday

‘We’re just kids’: As lawmakers debate transgender athlete ban, some youth fear a future on the sidelines

Editors’ Picks

Concord martial arts studio builds life skills far beyond combat

Red barn on Warner Road near Concord/Hopkinton line to be preserved

FAFSA fiasco hits New Hampshire college goers and universities hard

Searchable Concord salary database: Top earners include more police, fewer women

Sports

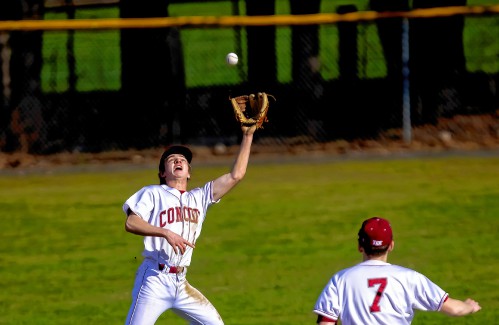

Wednesday’s high schools: Fancher’s 2-run blast leads Concord baseball to victory; plus more baseball, softball, lax and tennis results

Baseball: Concord 9, Winnacunnet 8. Key players: Concord – Matt Jenness (winning pitcher), Jackson Martin (save), Mitch Coffey (3 hits, 2 runs, RBI), Dawson Fancher (2-run HR, 2 hits, 2 runs, 3 RBI), Brett McDonough (2 hits, 2 RBI), Kaelen Williams (hit,...

Opinion

Opinion: Student power: Confronting collaborators and cowards at home and abroad

Robert Azzi is a photographer and writer who lives in Exeter. His columns are archived at robertazzitheother.substack.com. “From the beginning of Western speculation about the Orient, the one thing the Orient could not do was to represent itself,”...

Opinion: Adopting the right 306 Rules

Opinion: Bankers have the NH Public Deposit Investment Pool in their sights

Opinion: Proposed height zoning change for Concord’s Main Street

Politics

Sununu says he’ll support Trump even if he’s convicted

As jury selection begins this week in the hush-money trial of former President Donald Trump, New Hampshire Gov. Chris Sununu says he doesn’t believe many voters view Trump’s criminal indictments, his actions on Jan. 6, 2021, or his election denialism...

NH mayors want more help from state on homelessness prevention funds

Two Democrats with parallel views run for same State Senate seat

House passes bill removing exceptions to state voter ID law

League of Women Voters suing over AI robocalls sent in NH

Arts & Life

From the farm: Spring brings calves, beautiful calves

It’s finally spring, and on Miles Smith Farm, that means baby calves. Spring calves result from careful planning, not by the cows, but by me, the farmer. Since cow gestation is nine months, Ferdie, the Scottish Highland bull, got to schmooze with the...

NH Furniture Masters present new member show this spring

Vintage Views: The greatest factory that never was

Obituaries

Allen C. Cole

Allan C. Cole Pembroke, NH - Allan C. Cole, 87, of Pembroke, passed away on Wednesday, March 27, 2024 at Concord Hospital following a period of declining health. Allan was born on Novembe...

Kirsten Wirth

Concord, NH - Kirsten Wirth passed from this life peacefully after a brief battle with cancer, with three of her siblings surrounding her with love at her bedside in Concord, NH on March 29...

Cynthia Grudak

Hayesville, NC - Cynthia Charles Grudak, 84, of Murphy, North Carolina passed away Tuesday, April 9, 2024, in a Clay County care facility. She was the daughter of the late Harold and Louise...

Kevin Ward Jenkins

Concord, NH - Kevin Ward Jenkins, 67, died Friday, April 12, 2024 at Concord Hospital, Concord, NH. Born on April 3, 1957 in Concord, NH, Kevin is the son of the late Ward and Charlene ...

Opinion: America needs systematic change

Opinion: The eclipse reveals an allegory about the current political life of Congress

On the trail: NH GOP leaders urge ‘decorum and respect’

From a book to bread, Merrimack Valley High School students show off their senior projects

Vandals hit mausoleum of Tilton's namesake

Beekeepers, farmers square off in NH Senate committee hearing

Gallery: Forty-mile gravel bicycle race draws racers from all over the region

The fraught path forward for cannabis legalization, explained

Softball: Maddy Wachter Ks 12, Concord holds off Winnacunnet in 2023 championship rematch

High schools: Tuesday’s track, baseball, softball, lacrosse and tennis results

Boys’ lacrosse: With a different level of energy and focus, MV feels primed for success

Baseball: Concord makes eight errors but shows reasons for optimism in wild extra-inning loss

Opinion: Being and becoming: A good doctor in the age of artificial intelligence

Sunapee Kearsarge Intercommunity Theater presents ‘Olympus On My Mind’ in April

Inspired by Robert Frost, New Hampshire Poet Laureate Jennifer Militello has achieved her childhood dreams