Hopkinton’s Ben Normand to represent USA at United World Games Basketball

Hopkinton High junior Ben Normand will become the first New Hampshire basketball player to represent PhD Hoops, one of the United States teams in the United World Games.

By all appearances, Canadians are leery of coming to NH

Charyl Reardon hoped this would be the year.

Most Read

Study finds recyclables valued in millions of dollars tossed in New Hampshire’s waste stream

Helen Hanks resigns as Department of Corrections commissioner

As Canadian travel to the U.S. falls, North Country businesses are eyeing this Victoria Day weekend to predict impacts in New Hampshire

‘I thought we had some more time’ – Coping with the murder-suicide of a young Pembroke mother and son

Owners of Lewis Farm prepare to bring back agritourism after long dispute with city of Concord

‘It's how we fight back’: Youth demonstrate at “No Voice Too Small” rally

Editors’ Picks

The Monitor’s guide to the New Hampshire legislature

One year after UNH protest, new police body camera footage casts doubt on assault charges against students

‘It’s always there’: 50 years after Vietnam War’s end, a Concord veteran recalls his work to honor those who fought

‘We honor your death’ – Arranging services for those who die while homeless in Concord

Sports

High schools: Belmont’s Divers pitches perfect game; Monday’s baseball, softball, lacrosse and tennis results

Belmont 24, Berlin 0, 5 inn.

Opinion

Opinion: In the fight to stop sexual violence, can polio hold the solutions?

Molly McHugh is the Director of Communications at No Means No Worldwide, an organization dedicated to ending sexual violence. She lives in Orford.

Opinion: Where are the permanent solutions for a more stable budget?

Opinion: My memories of Vietnam 50 years later

Opinion: Concord officials: Can we sit and talk?

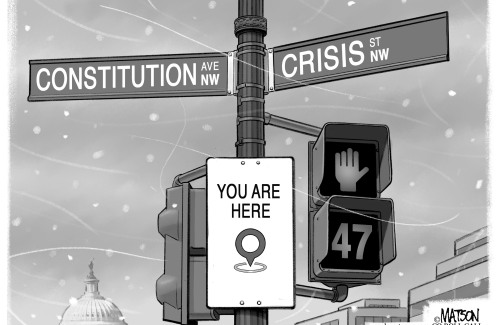

Opinion: Trump versus the U.S. Constitution

Politics

‘A wild accusation’: House votes to nix Child Advocate after Rep. suggests legislative interference

Rosemarie Rung thinks of Elijah Lewis often.

Sununu decides he won’t run for Senate despite praise from Trump

Arts & Life

Young Professional of the Month Katie Duncan shares about creativity, community, connection

Meet Katie Duncan, Membership Manager and Educational Outreach Coordinator at the Capitol Center for the Arts. The 35-year-old Concord resident’s passion for the arts and the Concord community shines through her work. From theater stages to local lakes, Katie shares how growing up in Greater Concord shaped her path, and why she’s dedicated to giving back.

Tiny Tapestry sale at Red River Theaters raising money for Concord Coalition to End Homelessness

Bowling for a cause: Angelman Syndrome Fundraiser coming to Boutwell’s

Beautify Allenstown hosting community cleanup day

Donating “The Bibliophile”

Obituaries

Timothy Harkness

Lakeland, FL - Tim Harkness, age 61, of Lakeland, FL passed away peacefully on April 30, 2025. He was born on July 30, 1963 in Vermont and grew up in Epsom, NH. He had moved to Florida in 2018. He had always loved cars and had multiple ...

Cody Chamberlain

Franklin, NH - It is with great sadness that we announce the unexpected passing of Cody James Chamberlain on May 17, 2025, at the age of 28 due to a motorcycle accident. Cody was born on July 29, 1996, and was the beloved son of Win...

Alan Kanegsberg

Dedham, MA - Alan Kanegsberg (March 6, 1940) passed away peacefully at 85 (May 17, 2025). Alan leaves his devoted wife of 63 years, Helaine (Rosenberg), sons Howard and Philip, beloved grandson Torston, and sister Samantha K. Burkey. Al...

Phyllis R. Kelley

Gilmanton, NH - Phyllis R. Kelley, fondly called Dolly by family and friends, died May 4, 2025, just one day shy of her 101st birthday, at Golden View Health Care Center, Meredith, NH, following a brief illness. Phyllis was born in Pittsf...

Catherine Masterson named next superintendent of Merrimack Valley and Andover starting in 2026

How did we get here? – Ahead of Thursday hearing, a timeline of the Beaver Meadow golf clubhouse

‘Construction issues’ halt work on new psychiatric hospital in Concord

Opinion: Unfair taxes, unfair schools: The New Hampshire way

Remembering Sarah Kinter, descendant of Nathaniel Peabody Rodgers, “Friend of the Slave”

High schools: Freitas 1-hitter leads Hopkinton softball to shutout; Tuesday’s baseball, lax, tennis and track results

Bakery in New Hampshire wins in free speech case over a pastry shop painting

High schools: Pelletier scores 100th goal, leads Concord girls’ lax to first win; baseball, softball, boys’ lacrosse and track results from this weekend

Five former Concord Crush girls at St. Paul’s are soon to leave the nest to play NCAA Women’s Lacrosse

Boys’ tennis: Growing the sport, fun and pizza for a tight-knit Concord team on Senior Night

High schools: Concord girls win elite Merrimack Invitational, MV track sweeps senior day, Winnisquam’s Caruso wins 175th career victory, more results from Thursday

Town elections offer preview of citizenship voting rules being considered nationwide

Medical aid in dying, education funding, transgender issues: What to look for in the State House this week

On the Trail: Shaheen’s retirement sparks a competitive NH Senate race